You have to call your insurance company in order to change your billing address. You press a series of digits to navigate to a menu where you are prompted to enter your social security number. You have to look up your social security number, and the system times out. You call again with your social security number in hand, enter a series of digits, enter said social security number incorrectly because you’re flustered, “press two to re-enter”, enter said social security number correctly, and listen to elevator music until a half-asleep voice reads from a cheerful script and asks what your social security number is.

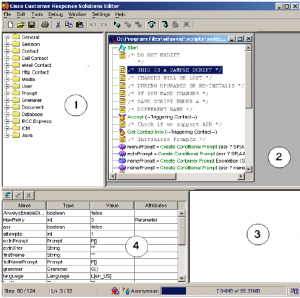

It’s a familiar but painful experience. The system you are trying to navigate is called an auto attendant. Cisco makes many of them. They vary in complexity but can be quite intense.

By nature, it’s a difficult process to accommodate. You are trying to navigate a system by audio alone. What people do when navigating something they can see (such as a website or a piece of software or a newspaper) is to scan, and they do so very quickly. People don’t read beginning-to-end. That’s why we have headers in websites and headlines and bylines in newspapers. But when all you have is audio, you can’t scan. You have to wait and listen. A timeline is forced upon you. To make matters worse, the keypad you are using to navigate has actions mapped to it that change with each step. So each step requires a new set of labels, and the only way to do this, of course, is with audio.

Also, there’s a fundamental disconnect between speech and comprehension. People can process spoken text at up to 210 words per minute, but typical speech runs at 150 – 160 words per minute (more on the topic). I’m not sure what the common words-per-minute rate is for auto attendants, but my guess is somewhere in the neighborhood of 100. So what happens when the information is coming too slowly and you have nothing to process? You get bored, and your mind starts to drift or you start fiddling with something else to fill the space. Then you miss the next audio prompt, and you have to repeat a step.
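To put rough numbers on that gap, here is a small back-of-the-envelope calculation in Python. The 30-word prompt length is a made-up figure for illustration, and the 100 words-per-minute attendant rate is only my guess from above, so treat the result as a sketch rather than a measurement.

```python
def seconds_to_speak(word_count, words_per_minute):
    """Time in seconds needed to speak word_count words at a given rate."""
    return word_count * 60 / words_per_minute


PROMPT_WORDS = 30    # hypothetical length of one menu prompt
ATTENDANT_WPM = 100  # guessed auto attendant rate (an assumption, not measured)
LISTENER_WPM = 210   # upper bound on what a listener can process

spoken = seconds_to_speak(PROMPT_WORDS, ATTENDANT_WPM)
needed = seconds_to_speak(PROMPT_WORDS, LISTENER_WPM)
idle = spoken - needed  # time the listener has nothing new to process

print(f"Prompt takes {spoken:.1f} s to play")
print(f"Listener could absorb it in {needed:.1f} s")
print(f"Idle time per prompt: {idle:.1f} s")
```

Under these assumptions, roughly half of each prompt is dead time for the listener, and that adds up across every step of a multi-level menu.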

An interesting comparison is screen readers (used by blind or vision-impaired people to read computer screens). The default speech rate for screen readers is much faster than typical speech. The voice reads in a robotic monotone that is more or less incomprehensible to a new user but familiar to someone who uses this software every day. Users can tab through each element on a page using the keyboard, so the control is back in the user’s hands. It’s almost like scanning visually, but perhaps more like listening to a CD – the user can go forward or back, but only in a linear order. And in designing accessible websites, one key requirement is to include an invisible link that lets people using screen readers skip “repetitive navigation”, so they don’t have to hear each item of a navigation menu again upon arriving at a new page.

So some improvements could be made to these auto attendants. Perhaps the systems could live not on the receiving end at the insurance company you are calling, but on your phone, which would allow you to customize the software to fit your needs. You could set the speech rate to what you want, and if your vision is good enough and your phone is so equipped, you could use the text display or caller-ID area of your phone to display a menu for each button (rather than having to listen to it). You could navigate forward and back with buttons that are consistent – no matter where you are calling.
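As a rough sketch of what that phone-side idea might look like, the Python below models a menu as a tree and keeps one key reserved for "back" at every level. Everything here is hypothetical: the `MenuNode` and `MenuNavigator` names, the choice of `*` as the back key, and the sample insurance menu are my own inventions, not how any real auto attendant works.

```python
from dataclasses import dataclass, field


@dataclass
class MenuNode:
    """One menu level: a label plus the options reachable by keypad."""
    label: str
    options: dict = field(default_factory=dict)  # key pressed -> MenuNode


class MenuNavigator:
    """Walks a menu tree with a back key that means the same thing everywhere."""
    BACK_KEY = "*"  # hypothetical choice of a consistent back button

    def __init__(self, root):
        self.path = [root]  # stack of visited levels, root first

    @property
    def current(self):
        return self.path[-1]

    def display(self):
        """Lines to show on the phone's text display instead of reading aloud."""
        lines = [f"{key}: {child.label}"
                 for key, child in sorted(self.current.options.items())]
        if len(self.path) > 1:
            lines.append(f"{self.BACK_KEY}: Back")
        return lines

    def press(self, key):
        """Handle one key press; unknown keys leave the position unchanged."""
        if key == self.BACK_KEY:
            if len(self.path) > 1:
                self.path.pop()
        elif key in self.current.options:
            self.path.append(self.current.options[key])
        return self.current


# Hypothetical menu for the insurance call described at the top.
root = MenuNode("Main menu", {
    "1": MenuNode("Billing", {"1": MenuNode("Change billing address")}),
    "2": MenuNode("Claims"),
})

nav = MenuNavigator(root)
nav.press("1")
print("\n".join(nav.display()))
```

Because the whole menu is visible at once, the caller can scan it the way they would scan a web page, and the back key never changes meaning from one step to the next.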

One incredibly easy improvement to existing systems, though, would be to not ask for the same information twice. For example, if an employee answering the phone is going to ask you for your social security number, it’s pointless and annoying for the auto attendant to ask for it beforehand. Why does this redundancy happen? Maybe the person who configures the auto attendant doesn’t update it frequently enough, and the rest of the company has changed its workflow without adjusting the auto attendant. Maybe some of the operators answering the phones are able to see the data you entered on a monitor, but some are not and have to ask you for it. Maybe it’s a sneaky method of filtering callers out so that there are fewer calls to answer. Assuming the company wants to make this experience less painful, making the auto attendant software simpler and easier to update may mean the difference between disgruntled customers and satisfied ones.